Hi there,

This week felt less like “wow, another model” and more like a preview of what the next layer of the stack is going to look like. The interesting shift was not just raw capability, but whether models are cheap enough to become dependable workhorses, whether agents can improve from day-to-day usage, and whether the software around them is finally starting to feel like an actual operating environment instead of a terminal tab and a prayer.

📃 In this Monday Morning Mashup:

⭐Highlight: Nemotron 3 Super looks more important as an agent workhorse than as a benchmark trophy

🔧Tools: OpenClaw-RL turns normal conversations into training signal

🌐Web: Star Office UI makes agent work visible instead of invisible

🤖AI: autoresearch hands a small training loop to an agent and lets it iterate overnight

Have a great week!

⭐Highlight: Nemotron 3 Super looks built for throughput, not just leaderboard arguments

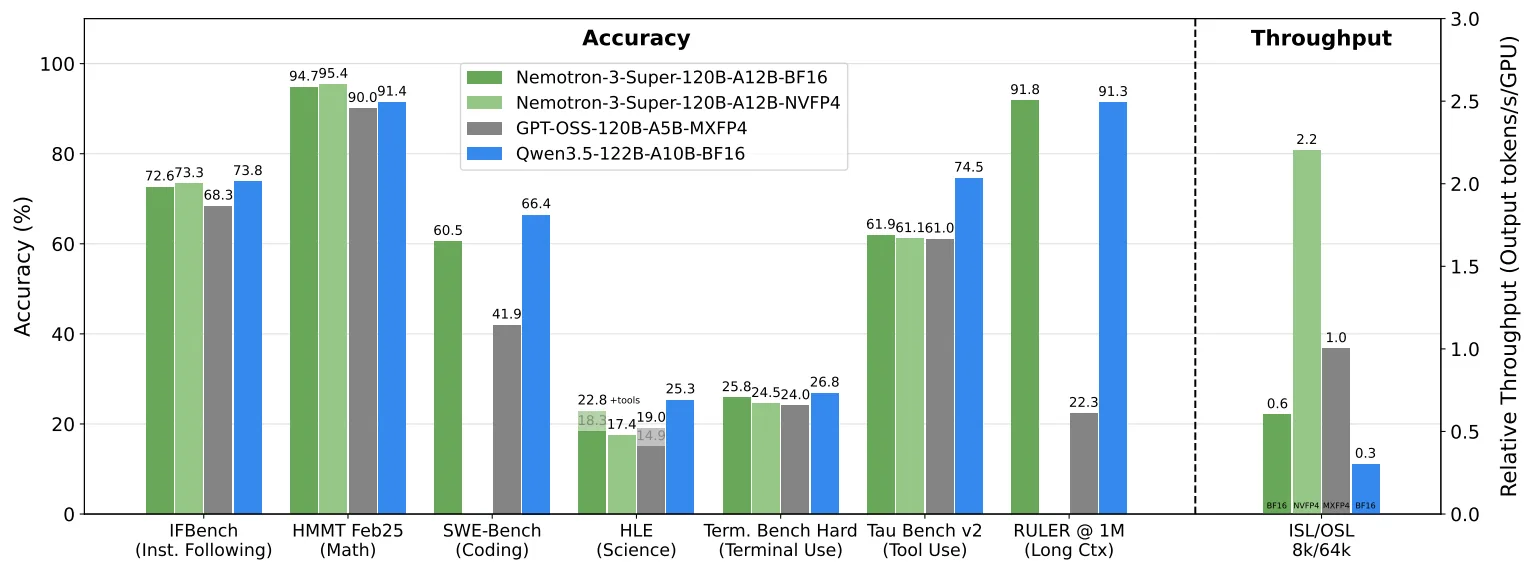

Nvidia’s Nemotron 3 Super did not land with the usual frontier-model fireworks, but the most interesting case for it has little to do with bragging rights on the hardest evals. The Signalbloom write-up makes a persuasive argument that this 120B-A12B model matters because it is unusually open by current standards, ships with a huge amount of accompanying training data and evaluation detail, and was designed to stay efficient even in 4-bit form.

The numbers that stood out to me were practical ones. Signalbloom notes that the NVFP4 version retains roughly 99.8% of the full-precision score on MMLU and RULER, reports about 62 tokens per second at a 512k context window on a single RTX Pro 6000, and says hosted providers are already pushing 400+ tokens per second. That is exactly the kind of profile that makes a model attractive for agent workloads: not necessarily the smartest in the room, but cheap, fast, and stable enough to carry a lot of real work. The one clear caveat is licensing: the terms look more permissive than before, but they are still not clean enough to ignore.

Nvidia’s Nemotron 3 Super is a Bigger Deal Than You Think

A detailed analysis of why Nemotron matters less as a benchmark champion and more as a fast, open-ish, low-cost model for agent systems.

🔧Tools: OpenClaw-RL is an interesting bet on training agents from normal use instead of carefully prepared datasets

Gen-Verse/OpenClaw-RL is one of the clearest examples I have seen of people trying to move reinforcement learning closer to real workflows. The repo describes a fully asynchronous training loop that wraps a self-hosted model behind an OpenAI-compatible API, intercepts live multi-turn conversations, and keeps optimizing the policy in the background while you continue using it.

What makes it more than a toy is the breadth of the setup. The project now supports local GPU and cloud deployment, includes LoRA training, and explicitly targets terminal, GUI, SWE, and tool-call settings instead of staying trapped in a narrow benchmark box. It also supports multiple learning styles: binary feedback, on-policy distillation from richer textual corrections, and a combined method. Whether this becomes the default way people personalize agents is still an open question, but the core idea is powerful: your ordinary usage can become the training pipeline.
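To make "ordinary usage becomes the training pipeline" a bit more concrete, here is a minimal sketch of what capturing that signal could look like. This is not OpenClaw-RL's actual API: the `Turn` and `ConversationLog` names, their fields, and the sample conversations are all hypothetical, and the real project does far more (asynchronous rollouts, policy updates, environment handling). It only illustrates how one logged conversation can feed both a binary-feedback stream and a textual-correction stream.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class Turn:
    """One captured exchange. All field names are hypothetical."""
    prompt: str
    response: str
    feedback: Optional[int] = None    # +1 or -1, None when the user gave no signal
    correction: Optional[str] = None  # free-text fix from the user, if any


@dataclass
class ConversationLog:
    """Collects turns as they happen and splits them into training streams."""
    turns: List[Turn] = field(default_factory=list)

    def record(self, turn: Turn) -> None:
        self.turns.append(turn)

    def binary_samples(self) -> List[Tuple[str, str, float]]:
        # (prompt, response, reward) triples for turns with explicit feedback.
        return [(t.prompt, t.response, float(t.feedback))
                for t in self.turns if t.feedback is not None]

    def correction_samples(self) -> List[Tuple[str, str, str]]:
        # (prompt, response, correction) triples for turns with a textual fix.
        return [(t.prompt, t.response, t.correction)
                for t in self.turns if t.correction]


log = ConversationLog()
log.record(Turn("list files", "ls -la", feedback=1))
log.record(Turn("undo last commit", "git reset --hard", feedback=-1,
                correction="Use git revert so history is preserved."))
log.record(Turn("what time is it?", "I can't check the clock."))  # no signal

print(len(log.binary_samples()), "binary samples")
print(len(log.correction_samples()), "correction samples")
```

The third turn carries no signal and is simply skipped, which is the part I find appealing: nobody has to label anything on purpose.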

An asynchronous RL framework for personalized and general-purpose agents, built to learn from live conversations and real task environments.

🌐Web: Star Office UI gives agents something most of them still lack: a legible presence

One of the more charming projects this week was ringhyacinth/Star-Office-UI, but it is not just charm. The project turns agent state into a little pixel office where you can see whether an assistant is idle, writing, researching, executing, syncing, or stuck in an error state. It also pulls in recent work notes, supports multiple agents in the same office, and offers language switching, mobile viewing, and even an optional desktop-pet-style mode.
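For a sense of how little data a status layer like this needs, here is a rough sketch of the state model. The six state names come from the project description; everything else (the `StatusUpdate` shape, the JSON fields, the `office_summary` helper) is my own assumption for illustration, not Star Office UI's real schema.

```python
import json
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import Iterable


class AgentState(str, Enum):
    IDLE = "idle"
    WRITING = "writing"
    RESEARCHING = "researching"
    EXECUTING = "executing"
    SYNCING = "syncing"
    ERROR = "error"


@dataclass
class StatusUpdate:
    """A single status report from one agent (hypothetical shape)."""
    agent: str
    state: AgentState
    note: str = ""

    def to_json(self) -> str:
        return json.dumps(
            {"agent": self.agent, "state": self.state.value, "note": self.note}
        )


def office_summary(updates: Iterable[StatusUpdate]) -> Counter:
    """Count agents per state, using only each agent's most recent update."""
    latest = {}
    for update in updates:
        latest[update.agent] = update.state
    return Counter(state.value for state in latest.values())


updates = [
    StatusUpdate("planner", AgentState.IDLE),
    StatusUpdate("coder", AgentState.RESEARCHING, "reading docs"),
    StatusUpdate("planner", AgentState.ERROR, "tool call failed"),
]
print(office_summary(updates))
print(updates[1].to_json())
```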

I like this category a lot because it addresses a real usability problem. Agents often feel opaque even when they are working correctly. You get logs, maybe a terminal, maybe a notification if you’re lucky. A visual state layer is not the deepest technical innovation in the world, but it could be exactly the sort of interface polish that makes long-running agents feel less abstract and easier to trust.

A pixel-art status dashboard for AI assistants, with multi-agent presence, activity states, daily notes, mobile support, and OpenClaw integration.

🤖AI: autoresearch is Karpathy’s compact vision of autonomous model improvement

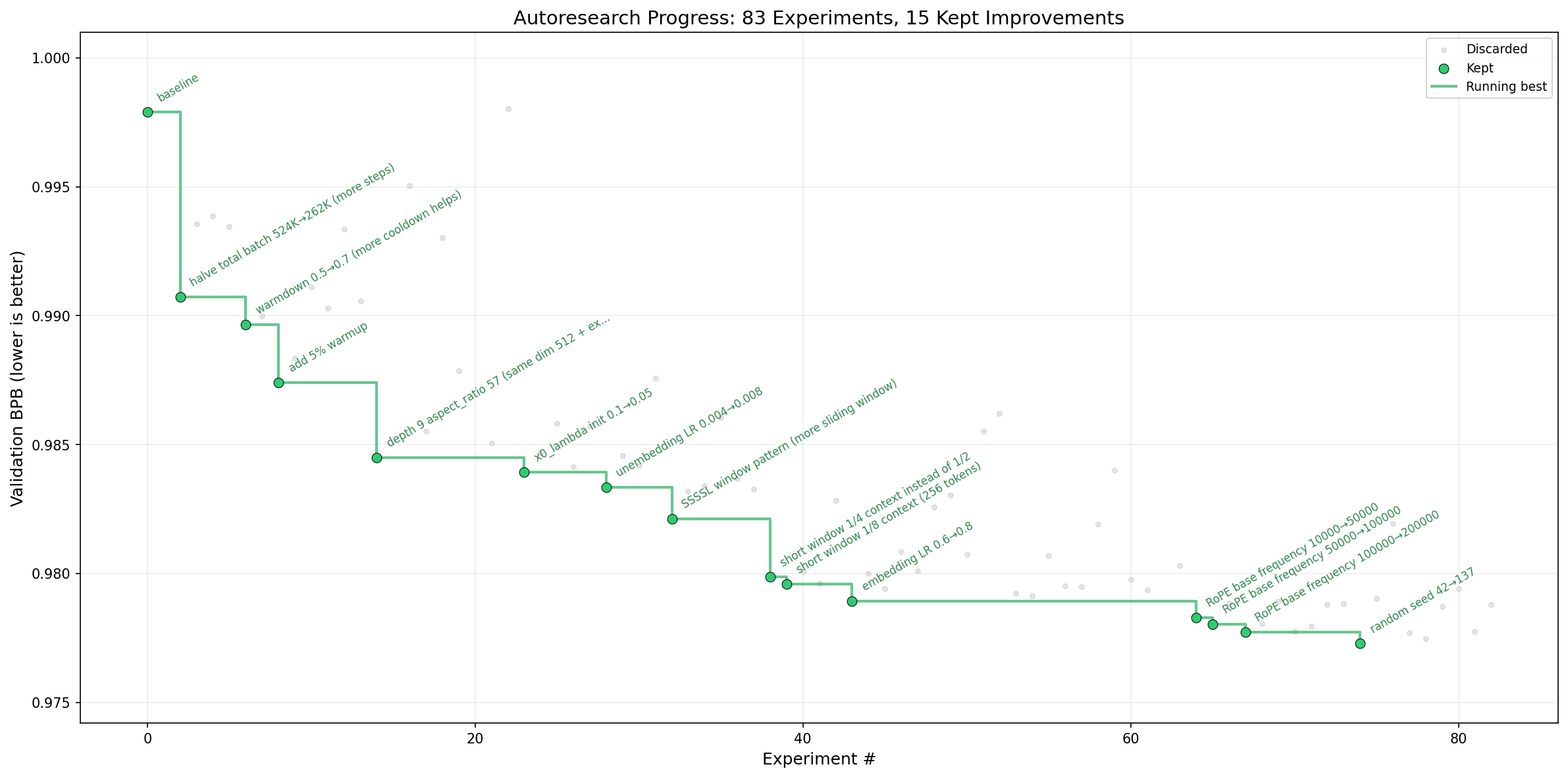

karpathy/autoresearch is a small repo with a big idea: give an agent a contained training setup, let it modify one file, run a five-minute experiment, measure the result, keep the improvement if it worked, and repeat all night. The project is intentionally tiny. The agent edits train.py, the human edits program.md, and the whole system is set up so research progress happens through a loop of small, comparable experiments instead of one giant rewrite.

That constraint is what makes it interesting. Karpathy estimates about 12 experiments per hour and roughly 100 while you sleep, all on a single-GPU setup. This is not a general-purpose replacement for human researchers, but it is a useful glimpse of what “autonomous research” could look like when it is kept concrete: narrow scope, fixed budget, measurable outcome, and an agent that learns by iterating rather than by pretending to have a grand plan.
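The loop itself is simple enough to sketch in a few lines. To be clear, this is not code from the repo: `improvement_loop`, `propose`, and `evaluate` are hypothetical names, the "experiment" is a toy quadratic standing in for a five-minute training run, and I am assuming a lower-is-better metric such as validation loss. The real agent edits train.py rather than nudging a single number.

```python
import random
from typing import Callable, Dict, List, Tuple

Config = Dict[str, float]


def improvement_loop(
    baseline: Config,
    propose: Callable[[Config], Config],
    evaluate: Callable[[Config], float],
    n_experiments: int,
) -> Tuple[Config, float, List[Tuple[float, bool]]]:
    """Try one change at a time and keep it only if the score improves."""
    best, best_score = baseline, evaluate(baseline)
    history = []
    for _ in range(n_experiments):
        candidate = propose(best)       # stand-in for the agent's edit
        score = evaluate(candidate)     # stand-in for one fixed-budget run
        kept = score < best_score       # lower is better (assumption)
        if kept:
            best, best_score = candidate, score
        history.append((score, kept))
    return best, best_score, history


rng = random.Random(0)  # seeded so the toy run is repeatable


def propose(cfg: Config) -> Config:
    # Toy "edit": rescale the learning rate by a random factor.
    return {"lr": cfg["lr"] * rng.uniform(0.5, 2.0)}


def evaluate(cfg: Config) -> float:
    # Toy "validation loss" with its minimum at lr = 0.003.
    return (cfg["lr"] - 0.003) ** 2


baseline = {"lr": 0.03}
best, best_score, history = improvement_loop(
    baseline, propose, evaluate, n_experiments=12
)

# Budget arithmetic from the numbers above: five-minute runs, 100 runs total.
experiments_per_hour = 60 // 5
hours_for_100 = 100 / experiments_per_hour
print(f"kept {sum(kept for _, kept in history)} of {len(history)} experiments")
print(f"{experiments_per_hour} runs/hour, about {hours_for_100:.1f} hours for 100 runs")
```

The budget math checks out, too: five-minute runs give 12 per hour, so 100 experiments take a little over eight hours, which is about one night of sleep.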

A stripped-down project for letting agents run and evaluate repeated small-scale training experiments automatically on a single GPU.

⚡Quick Hits

open-jarvis/OpenJarvis - Stanford’s OpenJarvis is a serious local-first agent stack: shared primitives, evaluation that treats energy and cost as first-class constraints, and a learning loop based on local trace data rather than default cloud dependence.

HKUDS/CLI-Anything - A strong expression of the “make software agent-native” thesis, packaging desktop tools behind structured command-line interfaces that are easier for coding agents to inspect and use reliably.

agentscope-ai/CoPaw - Personal assistant layer that spans multiple chat apps, local or cloud deployment, custom skills, memory, and a new Tool Guard approval layer for risky actions.

pretyflaco/meetscribe - Fully local meeting capture with dual-channel recording, speaker diarization, WhisperX transcription, Ollama summaries, and polished PDF output. A nice reminder that “AI product” does not have to mean “send all your conversations to the cloud.”

Have a great week!