Hi there,

This week felt like a reminder that the most interesting progress in AI is often not the model itself, but the surrounding machinery. Everything that stood out was about turning vague agent demos into something operational: execution environments that start almost instantly, dashboards that make a swarm of bots manageable, design guidance that pushes outputs past generic templates, and memory setups that do not quietly collapse under their own weight.

📃 In this Monday Morning Mashup:

⭐Highlight: Zeroboot makes isolated agent sandboxes feel closer to function calls than VM boots

🔧Tools: Paperclip turns a pile of coding agents into something that looks more like an org chart

🌐Web: Taste Skill suggests frontend quality is becoming teachable, reusable, and less mystical

🤖AI: The best agent memory stack might be layered, local, and slightly boring on purpose

Have a great week!

⭐Highlight: Zeroboot makes sandboxed execution feel fast enough to disappear into the workflow

zerobootdev/zeroboot is one of those projects where the headline sounds suspicious until you look at the architecture. The repo describes a system that boots a Firecracker VM once, snapshots the loaded runtime, then forks fresh KVM-based sandboxes from that snapshot with copy-on-write memory. The result, according to the published benchmark table, is roughly 0.79 ms spawn latency at p50, 1.74 ms at p99, and only about 265 KB of memory per sandbox.

What makes that interesting is not just the benchmark flex. If those numbers hold up in real workloads, they change the economics of running agents and serverless-style tasks with much stronger isolation than a normal container. The caveats matter too: the repo still calls itself a working prototype, networking is not implemented inside forks yet, and each fork is single-vCPU only. Still, this is one of the clearer examples I have seen of the agent tooling stack getting meaningfully more serious.

An open-source sandbox runtime that uses Firecracker snapshots and copy-on-write forking to launch isolated KVM VMs in under a millisecond.
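
To make the copy-on-write idea concrete, here is a toy sketch in Python. It is not Zeroboot's code and does not touch Firecracker or KVM; the `Snapshot` and `Fork` classes, the page size, and the page count are all assumptions made for illustration. It only shows why a fork can be cheap: every sandbox reads from one shared image and pays for memory only on the pages it actually writes.

```python
from dataclasses import dataclass, field

PAGE_SIZE = 4096  # bytes per page in this toy model (an assumption)


@dataclass(frozen=True)
class Snapshot:
    """Immutable memory image, captured once after the runtime has loaded."""
    pages: tuple  # one bytes object per page


@dataclass
class Fork:
    """A sandbox that shares the snapshot's pages until it writes to one."""
    base: Snapshot
    private: dict = field(default_factory=dict)  # page index -> bytearray

    def read(self, page: int) -> bytes:
        # Prefer the private copy; otherwise fall through to the shared image.
        if page in self.private:
            return bytes(self.private[page])
        return self.base.pages[page]

    def write(self, page: int, offset: int, data: bytes) -> None:
        if offset < 0 or offset + len(data) > PAGE_SIZE:
            raise ValueError("write must fit inside a single page")
        # First write to a page: copy it, so the shared image stays untouched.
        if page not in self.private:
            self.private[page] = bytearray(self.base.pages[page])
        self.private[page][offset:offset + len(data)] = data

    def private_bytes(self) -> int:
        """Memory this fork owns on its own, excluding shared pages."""
        return len(self.private) * PAGE_SIZE


# One 4 MiB image, two forks. Creating a fork copies no pages at all.
snapshot = Snapshot(pages=tuple(bytes(PAGE_SIZE) for _ in range(1024)))
fork_a = Fork(snapshot)
fork_b = Fork(snapshot)
fork_a.write(3, 0, b"hello")
```

After the write, `fork_a` owns exactly one private page, `fork_b` owns none, and the snapshot is unchanged. In a real hypervisor the same bookkeeping is done by the kernel's page tables rather than a dictionary, which is where the sub-millisecond numbers would come from.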

🔧Tools: Paperclip wants to be the management layer for autonomous companies, not just another coding shell

paperclipai/paperclip takes a bigger swing than most agent dashboards. The pitch is straightforward: if OpenClaw or Codex behaves like a worker, Paperclip should behave like the company around that worker. The project pairs a Node.js backend with a React UI and adds the organizational scaffolding that most people currently fake with terminals, folders, and memory alone: org charts, goal alignment, ticketing, budgets, governance, and scheduled heartbeats.

I like this idea because it tackles a real coordination problem. Plenty of people can already spin up one capable agent. The hard part is managing ten of them without losing context, accountability, or cost control. The pitch is very ambitious and comes with some hand-wavy product language, but the repo is concrete enough to take seriously. At roughly 31k stars already, it feels less like a toy dashboard and more like an early attempt at an operating system for agent-heavy work.

An orchestration layer for multi-agent businesses, with dashboards for budgets, roles, ticketing, governance, and long-running work.

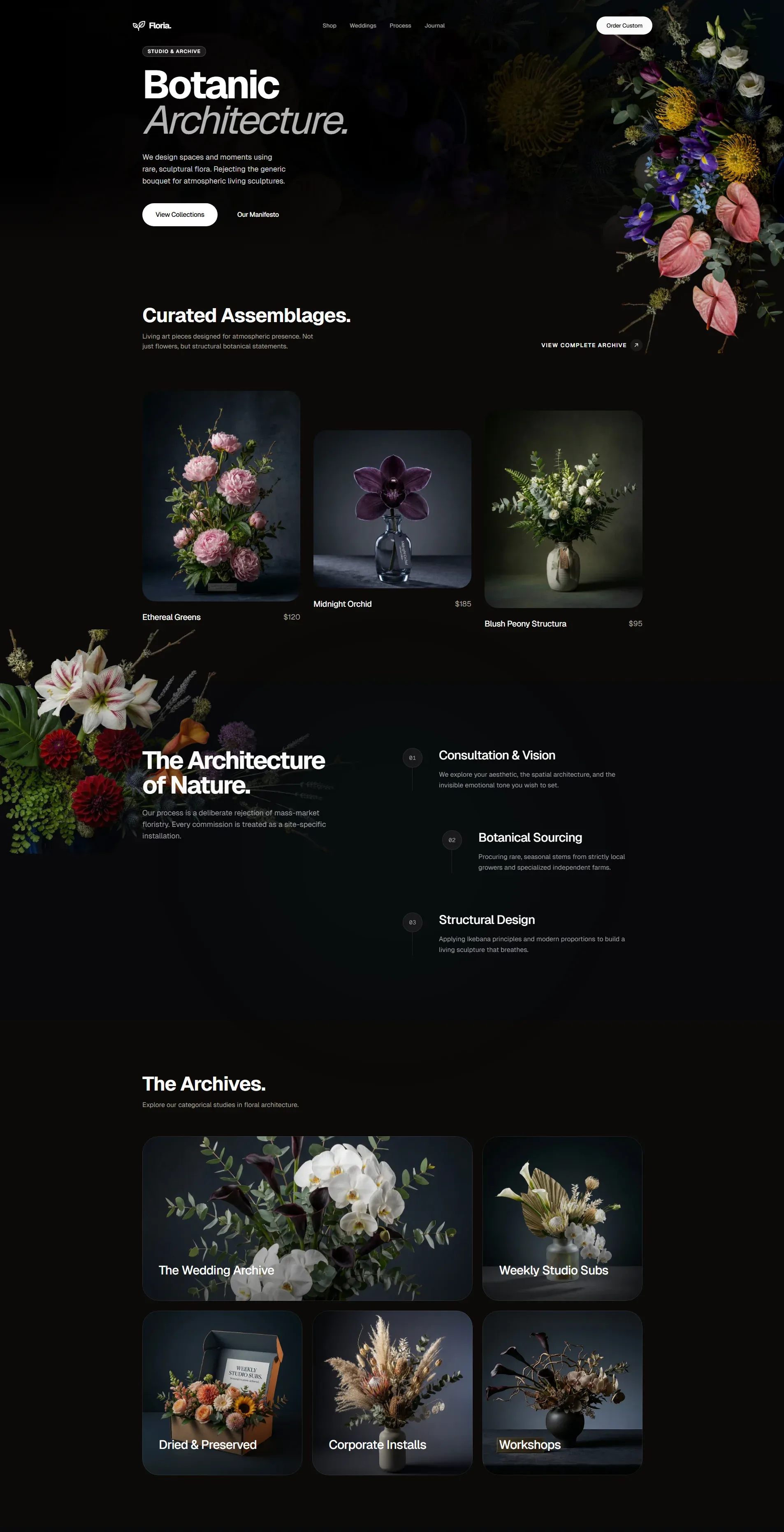

🌐Web: Taste Skill is a good sign that frontend quality is becoming more programmable

One of the more revealing projects this week was Leonxlnx/taste-skill. At first glance it looks like prompt packaging, and in a sense it is, but that undersells what is happening here. The repo turns aesthetic judgment into a reusable artifact for coding agents: layout preferences, spacing discipline, motion rules, typography decisions, and even adjustable knobs for design variance, motion intensity, and visual density. It is basically a thesis that “good taste” can be operationalized.

That idea lines up neatly with OpenAI’s new GPT-5.4 frontend guide, which argues that better UI output comes from explicit constraints, visual references, real content, and iterative browser-based verification. Put those together and you get a broader pattern: frontend quality is becoming less about hoping the model vibes its way into something good, and more about giving it a strong system for making design decisions. That is a meaningful shift, especially for people building software with small teams and very little formal design support.

A reusable skill pack for AI coding agents that pushes frontend output toward more opinionated layouts, better spacing, stronger typography, and more polished motion.

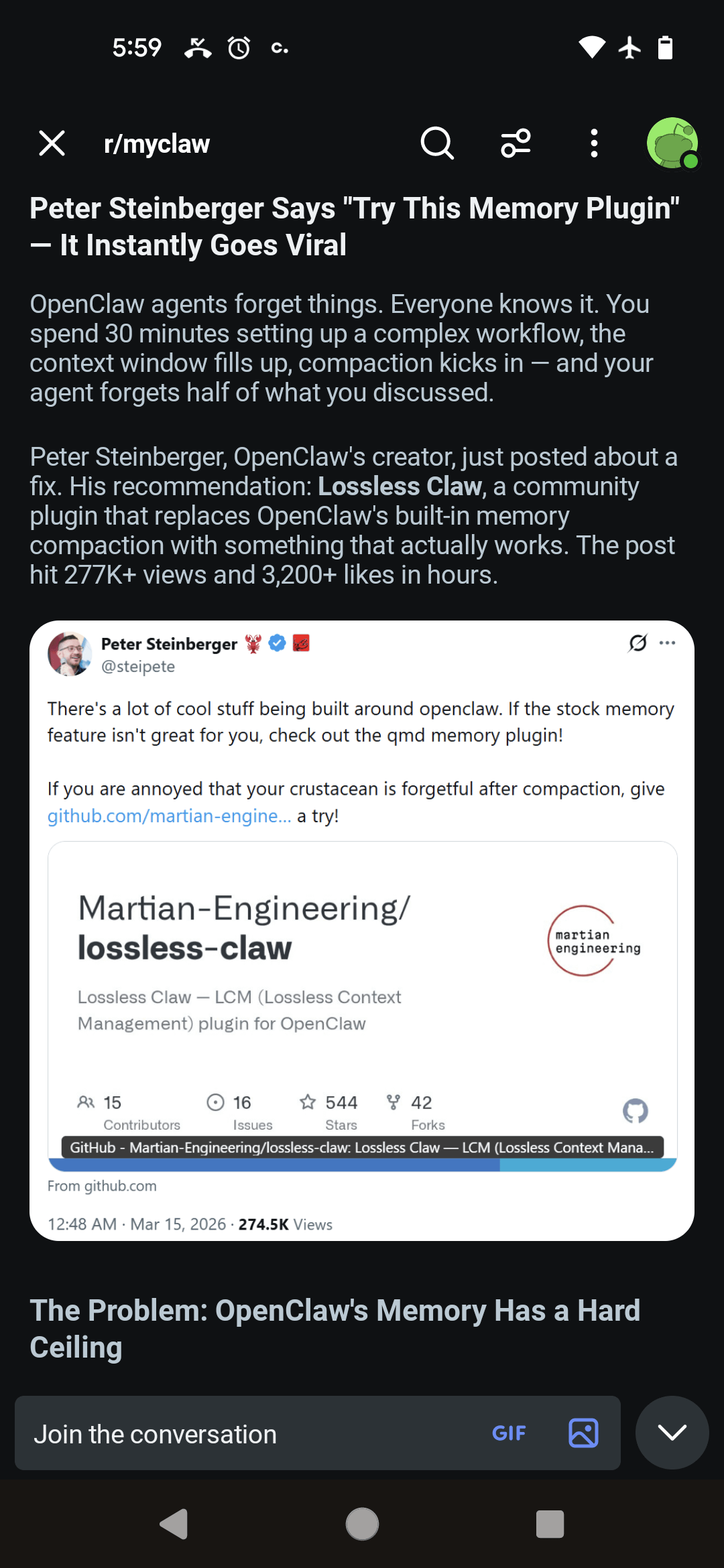

🤖AI: The most convincing memory advice I saw this week was to stop looking for one magic memory system

Aswin’s write-up on OpenClaw memory plugins is useful because it is not trying to sell a universal answer. The central warning is simple: plain markdown memory feels nice at first because it is local and readable, but it becomes a tax as logs swell and the agent keeps re-reading old context on every turn. The piece walks through the trade-offs of alternatives like Mem0, LanceDB, knowledge graphs, and SQLite, and lands on a view that feels much more practical than “just add vector search to everything.”

The recommendation that stuck with me was the layered stack: markdown or Obsidian for human-readable rules, QMD for targeted retrieval without loading the entire memory into the context window, and SQLite for structured data that needs to stay accurate. That is not the flashiest setup, but it sounds right. A lot of the current memory conversation still treats memory as one monolithic feature when it probably ought to be several separate systems with different jobs.

I Tested EVERY OpenClaw Memory Plugin (Most are Trash)

A grounded comparison of popular agent memory approaches, with a strong case for using separate layers for readable rules, cheap retrieval, and structured state.
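
The layered stack described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the setup from the write-up: the rules text, the notes, and the `monthly_budget_usd` key are made up, and the keyword-overlap `retrieve` function is only a stand-in for a targeted retrieval layer such as QMD (it does not use QMD's actual interface). The point is the separation of jobs: rules are always loaded, notes are pulled in only when relevant, and exact values live in SQLite.

```python
import sqlite3

# Layer 1: human-readable rules, small enough to load on every turn.
RULES_MD = """\
# Rules
- Always reply in English.
- Never commit directly to main.
"""

# Layer 2: a larger pile of notes that should NOT be loaded wholesale.
NOTES = [
    "The staging server uses a separate database.",
    "The user prefers short commit messages.",
    "Deploys to the staging server happen on Fridays.",
]


def retrieve(notes, query, limit=2):
    """Stand-in for targeted retrieval: rank notes by shared query words."""
    terms = set(query.lower().split())
    scored = [(len(terms & set(n.lower().split())), n) for n in notes]
    scored = [s for s in scored if s[0] > 0]
    scored.sort(key=lambda s: s[0], reverse=True)
    return [note for _, note in scored[:limit]]


# Layer 3: structured state that has to stay exact.
def open_state():
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE facts (key TEXT PRIMARY KEY, value TEXT NOT NULL)")
    return conn


def set_fact(conn, key, value):
    # Replacing by primary key means there is only ever one current value.
    conn.execute("INSERT OR REPLACE INTO facts (key, value) VALUES (?, ?)", (key, value))


def get_fact(conn, key):
    row = conn.execute("SELECT value FROM facts WHERE key = ?", (key,)).fetchone()
    return row[0] if row else None


def build_context(conn, query):
    """Assemble a prompt context from all three layers."""
    parts = [RULES_MD.strip()]
    parts += retrieve(NOTES, query)
    budget = get_fact(conn, "monthly_budget_usd")
    if budget is not None:
        parts.append(f"monthly_budget_usd = {budget}")
    return "\n\n".join(parts)
```

Calling `build_context(conn, "staging server deploys")` would include the rules, the two staging notes, and the stored budget, while leaving the unrelated commit-message note out of the context window entirely.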

⚡Quick Hits

Designing delightful frontends with GPT-5.4 - OpenAI’s new frontend guide is most useful not as a model promo, but as a practical checklist: tighter constraints, real content, visual references, and browser-based verification all produce noticeably better results.

developers.openai.com

ace-agent/ace - ACE is an interesting attempt to make agent improvement incremental instead of brittle, using generator, reflector, and curator roles to update a context “playbook” over time while claiming better accuracy and much lower adaptation latency.

github.com
