Hi there,

The pattern this week was not a single breakthrough so much as a slow refactor of how we work with agents. Specifications are creeping back to the center of the workflow, the “skill” has quietly become a real packaging unit, agents are starting to learn from the corrections you were already typing, and a 1-bit model finally feels good enough to actually use. Less hype, more plumbing, which is usually when interesting things start to land.

📃 In this Monday Morning Mashup:

⭐Highlight: GitHub’s Spec Kit pushes coding agents back toward written specifications

🤖AI: MiniMax open-sources the skill library that runs its own agent

🌐Web: Princeton turns the “no, that’s not what I meant” reflex into a training signal

🔧Tools: A 1-bit Bonsai model that actually behaves like a usable LLM

Have a great week!

⭐Highlight: Spec Kit makes specifications the actual artifact, not the throwaway scaffolding

The clearest signal that “vibe coding” has peaked is that GitHub itself is now shipping a toolkit aimed squarely at the opposite habit. github/spec-kit is an open source CLI for what GitHub calls Spec-Driven Development: instead of letting the agent invent intent on the fly, you write an executable specification first, and the implementation is generated from it. The repo has around 89k stars and integrates with Copilot, Cursor, Codex, and other coding agents through a simple `specify init` flow.

The interesting framing in the README is that for decades specifications were treated as scaffolding to throw away once “real coding” started. Spec Kit flips that, so the spec becomes the source of truth and the code is the build artifact. That sounds academic until you have lived through a multi-agent project where every session reinterprets the same task slightly differently. Pinning intent in one place that humans and agents both read makes a lot of those problems disappear.
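
A toy illustration of what “the spec is the source of truth” means in practice: intent and acceptance examples live in one object, and any implementation, whether hand-written or generated in some later agent session, is checked against it. This is a minimal Python sketch, not Spec Kit’s file format or workflow; the `Spec` class, the `slugify` feature, and its examples are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Spec:
    """Hypothetical spec object: written intent plus executable acceptance examples."""
    feature: str
    intent: str
    examples: list[tuple[str, str]] = field(default_factory=list)

    def check(self, impl: Callable[[str], str]) -> list[str]:
        """Run every example against impl; an empty list means the spec is satisfied."""
        failures = []
        for given, expected in self.examples:
            actual = impl(given)
            if actual != expected:
                failures.append(f"{self.feature}({given!r}) = {actual!r}, expected {expected!r}")
        return failures


# The single place where intent is written down (hypothetical feature).
SLUG_SPEC = Spec(
    feature="slugify",
    intent="Turn a title into a lowercase, hyphen-separated URL slug.",
    examples=[
        ("Hello World", "hello-world"),
        ("  Spec   Kit  ", "spec-kit"),
        ("Already-slugged", "already-slugged"),
    ],
)


def slugify(title: str) -> str:
    """An implementation that follows the spec: lowercase, collapse whitespace into hyphens."""
    return "-".join(title.lower().split())


def slugify_drifted(title: str) -> str:
    """A later session's reinterpretation: keeps case and uses underscores."""
    return title.strip().replace(" ", "_")


if __name__ == "__main__":
    print(SLUG_SPEC.check(slugify))  # []
    for failure in SLUG_SPEC.check(slugify_drifted):
        print(failure)
```

The drifted version is exactly the kind of silent reinterpretation described above; with intent pinned in the spec, it fails all three examples instead of slipping through.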

An open source toolkit for spec-driven development that turns your written specification into the executable source of truth, with first-class integrations for Copilot, Cursor, Codex and other AI coding agents.

🤖AI: MiniMax quietly publishes the skill library that powers its own agent

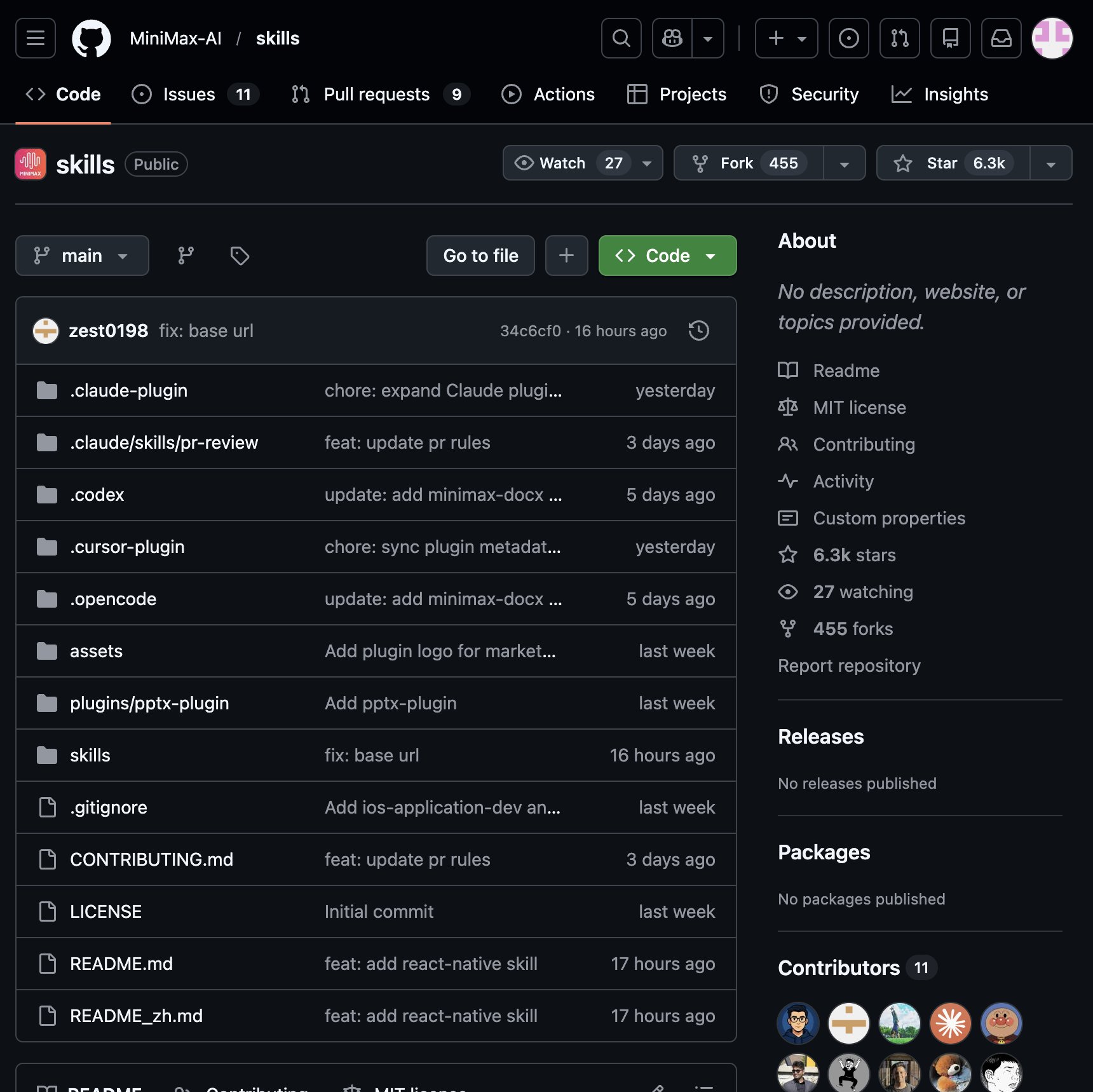

The other big shift in the agent stack this week was MiniMax open-sourcing MiniMax-AI/skills, the actual skill collection that drives their hosted agent. The repo bundles ready-made skills for editing PDF, Word, Excel, and PowerPoint files, generating posters and GIFs, running full Playwright browser flows, deploying MCP servers, and handling typical enterprise document workflows. Skills load automatically into the agent context, which removes the usual “which skill do I attach today” friction that breaks most homemade setups.

What makes this matter beyond MiniMax users is the plug-in design. The skills are framework-agnostic, so they work just as well inside Claude Code, Codex, OpenClaw, or any other harness that understands the skills format. That accelerates a quiet trend: instead of every agent having a bespoke tool registry, capabilities are starting to look like packages you install. The repo is still in beta, but it is one of the more concrete steps toward a genuinely shared agent ecosystem.
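
To picture how “skills as packages” can load without manual attaching, here is a minimal sketch of a harness scanning a skills folder and building a short index for the agent’s context. It assumes the widely used layout where each skill is a directory containing a `SKILL.md` file with `name` and `description` front matter; MiniMax’s repo may differ in the details, and `load_skill_index`, `render_context`, and the `pdf-editor` skill are hypothetical. The parser only handles flat `key: value` front matter so the example stays dependency-free.

```python
import tempfile
from pathlib import Path


def parse_front_matter(text: str) -> dict[str, str]:
    """Parse flat `key: value` lines between a leading pair of '---' fences."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    meta: dict[str, str] = {}
    for line in lines[1:]:
        if line.strip() == "---":
            break
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()
    return meta


def load_skill_index(root: Path) -> list[dict[str, str]]:
    """Collect name, description, and path for every <skill>/SKILL.md under root."""
    index = []
    for skill_file in sorted(root.glob("*/SKILL.md")):
        meta = parse_front_matter(skill_file.read_text(encoding="utf-8"))
        if "name" in meta and "description" in meta:
            index.append({"name": meta["name"],
                          "description": meta["description"],
                          "path": str(skill_file)})
    return index


def render_context(index: list[dict[str, str]]) -> str:
    """Render one line per skill for the prompt; full instructions stay on disk."""
    return "\n".join(f"- {s['name']}: {s['description']}" for s in index)


def demo() -> str:
    """Build a throwaway skills folder with one hypothetical skill and render its index."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        skill_dir = root / "pdf-editor"
        skill_dir.mkdir()
        (skill_dir / "SKILL.md").write_text(
            "---\nname: pdf-editor\ndescription: Edit and merge PDF files\n---\n"
            "# Instructions\nUse a PDF library to apply the requested edits.\n",
            encoding="utf-8",
        )
        return render_context(load_skill_index(root))


if __name__ == "__main__":
    print(demo())  # - pdf-editor: Edit and merge PDF files
```

Keeping only names and descriptions in the prompt is one plausible design choice: the context stays small, and a harness can read the full skill file once the agent decides it needs that skill.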

The open source skills library that runs MiniMax’s own agent: Office file editing, browser automation, MCP server deployment, and creative generation, designed to plug into any major agent framework.

🌐Web: Princeton turns “no, that’s not what I meant” into the training signal

One of the more underrated ideas this week came out of Princeton’s OpenClaw RL work. Their argument is that every time you re-ask a question, correct an answer, or rephrase your last message, you are giving the model a high-quality reward signal that today’s systems just throw away. Their setup watches conversational dynamics, treats a smooth follow-up as success and a re-ask as failure, and then updates the model in place during the conversation, with no engineers, no retraining job, and no downtime.
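
A deliberately crude version of that signal can be sketched in a few lines: compare each user message with the one before it, and treat an explicit correction or a near-paraphrase as a failed turn. This is not Princeton’s method (a real system would more plausibly ask a model to judge the follow-up); the correction phrases, the 0.6 similarity threshold on Python’s `difflib.SequenceMatcher`, and the function names are hypothetical.

```python
from difflib import SequenceMatcher

# Hypothetical openers that signal "that's not what I meant".
CORRECTION_PREFIXES = ("no,", "no ", "that's not", "that is not", "i meant", "not what i")


def turn_reward(prev_user_msg: str, next_user_msg: str, threshold: float = 0.6) -> int:
    """Return -1 if the follow-up looks like a correction or re-ask, else +1."""
    prev = prev_user_msg.lower().strip()
    nxt = next_user_msg.lower().strip()
    if nxt.startswith(CORRECTION_PREFIXES):
        return -1
    # A near-copy of the previous request suggests the answer missed the point.
    if SequenceMatcher(None, prev, nxt).ratio() >= threshold:
        return -1
    return 1


def conversation_rewards(user_turns: list[str]) -> list[int]:
    """Score each user turn after the first against the user turn before it."""
    return [turn_reward(a, b) for a, b in zip(user_turns, user_turns[1:])]


if __name__ == "__main__":
    turns = [
        "Summarize this PR in two bullets",
        "No, I meant shorter",
        "Great, now draft the release note",
    ]
    print(conversation_rewards(turns))  # [-1, 1]
```

Rewards like these would then feed whatever in-place update the system performs; that update step is outside the scope of this sketch.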

The reported numbers are striking. A general assistant moved from a personalization score of 0.17 to 0.81 in 36 conversations, and a grading assistant gave noticeably warmer and more detailed feedback after 24 interactions. Even with the usual caveats about narrow benchmarks, this points at a real direction: the next generation of useful agents will probably not be the ones with the biggest base model, but the ones that quietly fold your daily friction back into their behavior.

Princeton’s OpenClaw RL: AI that learns from your corrections

A research thread on a system that uses live conversational signals (re-asks, rephrasings, corrections) to personalize an assistant in roughly 36 conversations, with no retraining loop in the middle.

🔧Tools: A 1-bit model that finally crosses the “actually usable” line

For years 1-bit LLMs felt like a research curiosity. Microsoft’s BitNet runs were interesting on paper but unpleasant in practice. PrismML’s new Bonsai series seems to break that pattern. In a community write-up, Tim from AnythingLLM tested the 8B Bonsai on a 48GB M4 Max and reported genuinely usable behavior on chat, document summarization, tool calling, and web search, rather than the brittle outputs people have come to expect from extreme quantization.
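
The core trick behind 1-bit weights fits in a few lines: keep only the sign of each weight plus one floating-point scale per row, and pack eight signs into each byte. This is the textbook sign-plus-scale binarization (the mean-absolute-value scale is the one popularized by XNOR-Net), offered as an illustration of the technique; it is not necessarily how PrismML quantizes Bonsai, and the helper names are hypothetical.

```python
def binarize_row(row: list[float]) -> tuple[float, list[int]]:
    """Approximate a row of weights as alpha * sign(w) with a single scale alpha.

    For a fixed sign pattern, alpha = mean(|w|) minimizes the squared error.
    """
    alpha = sum(abs(w) for w in row) / len(row)
    signs = [1 if w >= 0 else -1 for w in row]
    return alpha, signs


def pack_signs(signs: list[int]) -> bytes:
    """Store one bit per weight (1 means +1, 0 means -1), eight weights per byte."""
    packed = bytearray((len(signs) + 7) // 8)
    for i, s in enumerate(signs):
        if s > 0:
            packed[i // 8] |= 1 << (i % 8)
    return bytes(packed)


def dequantize(alpha: float, signs: list[int]) -> list[float]:
    """Reconstruct the approximate weights, e.g. to measure quantization error."""
    return [alpha * s for s in signs]


if __name__ == "__main__":
    alpha, signs = binarize_row([0.5, -0.25, 0.75, -0.5])
    print(alpha, signs)              # 0.5 [1, -1, 1, -1]
    print(pack_signs(signs))         # b'\x05'
    print(dequantize(alpha, signs))  # [0.5, -0.5, 0.5, -0.5]
```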

The catch is the runtime story. Bonsai ships as GGUF but needs a custom llama.cpp fork because mainline does not implement the 1-bit operations yet, and that fork is somewhat behind upstream. Memory pressure, on the other hand, is dramatically lower than with a comparable 4-bit Qwen model, which is the whole point. If a few more model families adopt this approach, the practical floor for “useful local model on a phone or old laptop” drops a lot, and the assumption that you need a fresh GPU for every new release starts to look dated.
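
Why the memory drop is so large comes down to simple arithmetic on the weights. The sketch below assumes 8 billion parameters and, optionally, a 16-bit scale shared by every 128 weights; the group size and scale width are illustrative assumptions, not Bonsai’s or Qwen’s actual storage layout, and real usage adds KV cache, activations, and any layers kept at higher precision.

```python
def effective_bits(weight_bits: float, scale_bits: int = 16, group_size: int = 128) -> float:
    """Bits per weight once a shared per-group scale is amortized (assumed layout)."""
    return weight_bits + scale_bits / group_size


def weight_gb(num_params: float, bits_per_weight: float) -> float:
    """Weights-only footprint in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    n = 8e9  # an 8B-parameter model
    for bits in (1, 4, 16):
        print(f"{bits}-bit weights: {weight_gb(n, bits):.3f} GB")
    for bits in (1, 4):
        print(f"{bits}-bit + scales: {weight_gb(n, effective_bits(bits)):.3f} GB")
```

Under these assumptions the 1-bit weights come to about 1.125 GB against 4.125 GB at 4 bits, roughly a quarter of the footprint, which is the headroom that makes phones and older laptops plausible targets.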

The Bonsai 1-bit models are very good

A practical hands-on report from a developer running Bonsai 8B on a MacBook, with notes on real workloads, memory pressure, and the awkward custom llama.cpp fork it currently needs.

⚡Quick Hits

remorses/playwriter - A Chrome extension and CLI that lets coding agents drive your already-logged-in browser with full Playwright snippets, instead of spawning a fresh, cookie-less Chrome that bot detectors flag instantly. Around 3.4k stars and unusually useful as an MCP for daily agent work.

github.com

millionco/expect - A skill that reads your git diff, generates a test plan, and runs it in a real browser via Playwright, then hands the failures back to your agent to fix. Less script writing, more “QA superpowers” wired straight into Claude Code, Codex, or Cursor.

github.com
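
The first stage of a diff-driven QA loop like expect’s, working out what changed before deciding what to test, can be sketched with plain string handling on a unified diff. This is a hypothetical simplification, not expect’s implementation: the tool described above generates a real test plan and runs it through Playwright, whereas `plan_from_diff` here just emits one placeholder check per changed file.

```python
def changed_files(diff_text: str) -> dict[str, int]:
    """Map each file touched by a unified diff to the number of lines it adds."""
    added: dict[str, int] = {}
    current = None
    for line in diff_text.splitlines():
        if line.startswith("+++ "):
            path = line[4:].strip()
            # Deleted files appear as "+++ /dev/null"; there is nothing to test there.
            current = None if path == "/dev/null" else path.removeprefix("b/")
            if current is not None:
                added.setdefault(current, 0)
        elif current is not None and line.startswith("+"):
            added[current] += 1
    return added


def plan_from_diff(diff_text: str) -> list[str]:
    """Turn the changed files into one hypothetical browser check per file."""
    return [f"Exercise the UI backed by {path} ({n} added lines)"
            for path, n in changed_files(diff_text).items()]


SAMPLE_DIFF = """\
diff --git a/src/cart.ts b/src/cart.ts
--- a/src/cart.ts
+++ b/src/cart.ts
@@ -1 +1,3 @@
 const items = [];
+const total = 0;
+export { total };
"""

if __name__ == "__main__":
    for step in plan_from_diff(SAMPLE_DIFF):
        print(step)  # Exercise the UI backed by src/cart.ts (2 added lines)
```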

run-llama/liteparse - LlamaIndex’s new local PDF parser focused on fast spatial text extraction with bounding boxes, optional OCR, and screenshot generation for LLM agents. Useful as the boring, dependable layer under more glamorous document pipelines.

github.com
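
Bounding boxes matter because PDF text often arrives as positioned fragments rather than ordered lines. A common first step, shown here as a minimal sketch, is to cluster fragments whose top edges are close into lines and then sort each line left to right. The `Span` type and the 3-point tolerance are hypothetical, this is not liteparse’s API or algorithm, and real layouts (columns, tables, rotated text) need considerably more than this.

```python
from dataclasses import dataclass


@dataclass
class Span:
    """A positioned text fragment (hypothetical type); y grows downward from the page top."""
    text: str
    x0: float  # left edge
    y0: float  # top edge


def reading_order(spans: list[Span], line_tol: float = 3.0) -> list[str]:
    """Group spans whose top edges are within line_tol into lines, then read left to right."""
    lines: list[list[Span]] = []
    for span in sorted(spans, key=lambda s: (s.y0, s.x0)):
        if lines and abs(span.y0 - lines[-1][0].y0) <= line_tol:
            lines[-1].append(span)
        else:
            lines.append([span])
    return [" ".join(s.text for s in sorted(line, key=lambda s: s.x0)) for line in lines]


if __name__ == "__main__":
    spans = [
        Span("world", x0=60, y0=100.5),
        Span("Hello", x0=10, y0=101),
        Span("line", x0=70, y0=119),
        Span("Second", x0=10, y0=120),
    ]
    print(reading_order(spans))  # ['Hello world', 'Second line']
```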

lightclaw - A single-binary, local-first AI agent written in Rust. Tom Doerr’s pick this week, and a good example of the “small, ship-as-one-file” trend in personal agents.

x.com

NOMAD - A self-hosted, real-time collaborative travel planner. A nice change of pace from the usual agent-on-agent content.

x.com

animaworks - An autonomous AI agent organization framework, in the same spirit as last week’s Paperclip but smaller and at an earlier stage.

x.com

Have a great week!