Hi there,

This week brought one genuinely important model release and a few useful reminders that the real battle is shifting from raw intelligence to workflow quality. OpenAI’s GPT-5.4 launch was notable not just because it was bigger or newer, but because it was framed as a model for actual professional work: documents, spreadsheets, coding, tool use, and longer-running agent tasks.

📃 In this Monday Morning Mashup:

⭐Highlight: GPT-5.4 is OpenAI’s strongest push toward work-oriented agents

🔧Tools: shadcn/cli v4 gives coding agents better project context

📱Remote: HAPI turns your phone into a real coding-session remote

⚡Quick Hits: agent evals, model routing under rate limits, a founder resource kit, and benchmark gaming

Have a great week!

⭐Highlight: GPT-5.4 looks like a release aimed at work, not just chat

OpenAI released GPT-5.4 on March 5 as GPT-5.4 Thinking in ChatGPT, plus GPT-5.4 and GPT-5.4 Pro in the API and Codex. The positioning is revealing: OpenAI calls it its most capable model for professional work, and the examples it emphasizes are not poetry prompts or viral demos but spreadsheets, presentations, documents, coding, web research, and multi-step agent workflows.

The release notes make a stronger case than most model announcements do. GPT-5.4 is the first general-purpose OpenAI model with native computer-use capabilities, a 1.05M-token context window in the API, and new tool-search support for large tool ecosystems. On OpenAI’s reported benchmarks, it improves on GPT-5.2 from 70.9% to 83.0% on GDPval (professional knowledge work), from 47.3% to 75.0% on OSWorld-Verified (computer use), and from 46.3% to 54.6% on Toolathlon (multi-step tool use). It also slightly edges out GPT-5.3-Codex on SWE-Bench Pro, scoring 57.7% versus 56.8%.

What I find most interesting is that OpenAI is trying to merge three product lines into one story: better reasoning, better coding, and better agents. The new system card also matters here. GPT-5.4 Thinking is described as the first general-purpose model in the series shipped with mitigations for high-capability cybersecurity risk, which tells you how seriously OpenAI views the direction of travel. Whether or not the benchmark numbers hold up in practice, this release clearly pushes the market toward “can it finish the workflow?” rather than “can it answer the question?”

OpenAI’s official launch post covering availability, benchmark gains, computer use, tool search, pricing, and the model’s positioning as a professional-work system.

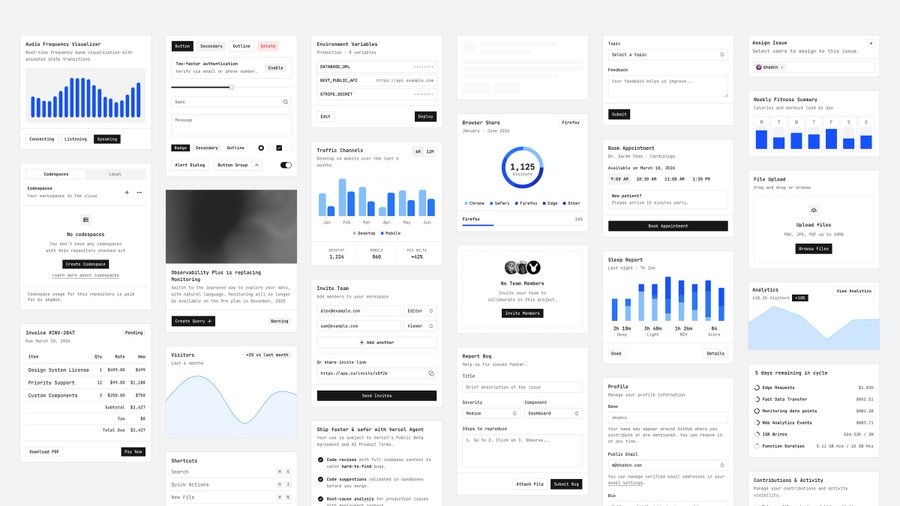

🔧Tools: shadcn is building for the age of coding agents, not just human copy-paste

shadcn/cli v4 stood out because it treats AI assistance as a first-class workflow instead of a side effect. The new CLI docs show a much broader surface area than just “add button”: presets, monorepo scaffolding, dry-run support, registry search and view commands, and more flexible project initialization across frameworks like Next.js, Vite, Astro, and React Router.

The more important idea is shadcn/skills. Instead of hoping a coding agent guesses your setup correctly, the skill reads your project’s components.json and injects framework, aliases, installed components, icon library, and base library context into the assistant. In plain English, it is an attempt to turn design-system knowledge into machine-readable context, which is exactly the kind of boring infrastructure that makes agent output feel less random.

Documentation for the new skills system that gives coding agents project-aware context about components, patterns, registries, and CLI workflows.

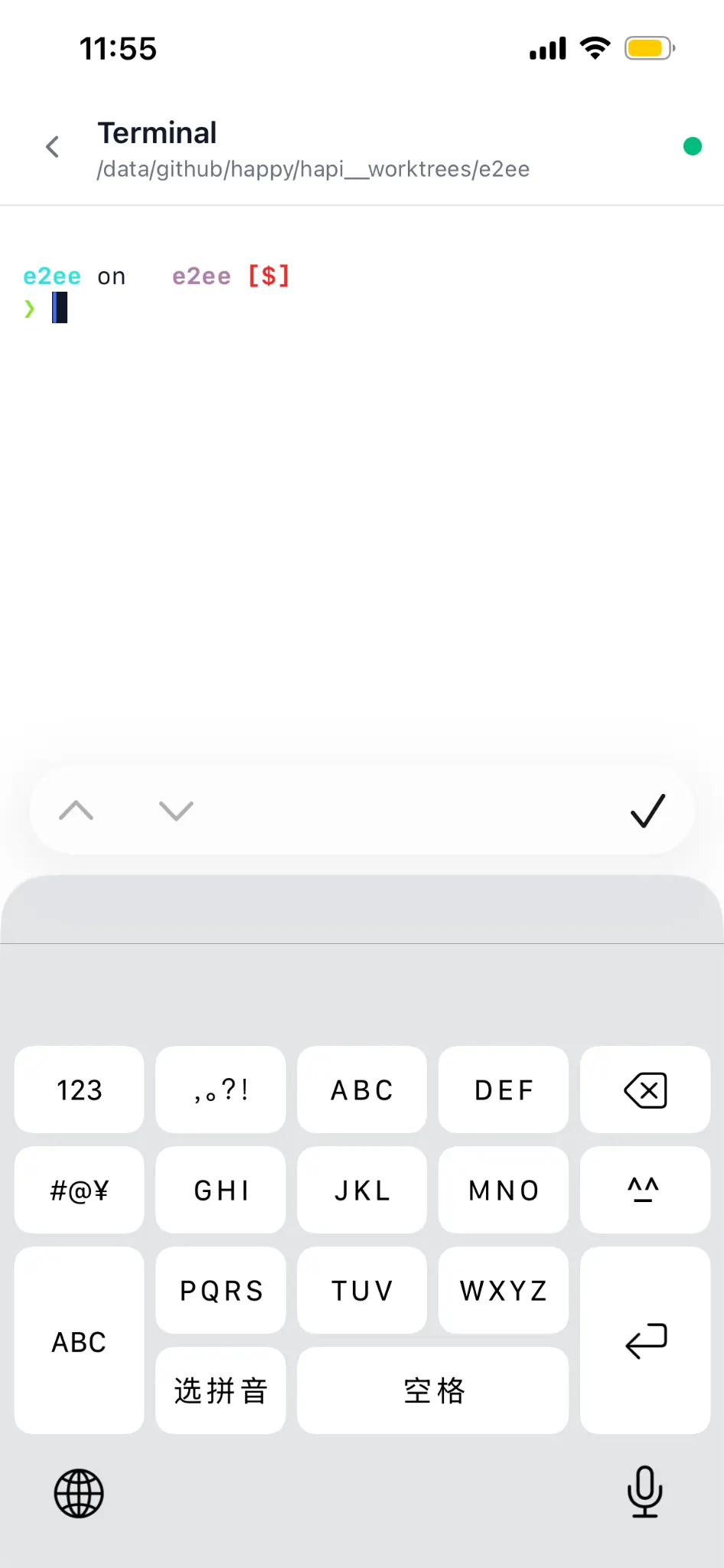
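To make the components.json idea concrete, here is a minimal sketch of what “turning project config into agent context” can look like. This is not the shadcn/skills implementation: the function name buildAgentContext is hypothetical, it reads only a few fields that components.json files commonly contain (style, iconLibrary, tailwind.baseColor, aliases), and it assumes the framework and installed components are detected elsewhere and passed in.

```typescript
// Hypothetical sketch, NOT the shadcn/skills implementation.
// Models a subset of fields commonly found in a shadcn components.json file.
interface ComponentsJson {
  style?: string;
  rsc?: boolean;
  tsx?: boolean;
  iconLibrary?: string;
  tailwind?: { css?: string; baseColor?: string; cssVariables?: boolean };
  aliases?: Record<string, string>;
}

// Turn raw components.json text into a plain-text context block that an
// assistant could receive before it edits UI code. Framework and installed
// components are assumed to be detected separately and passed in.
function buildAgentContext(raw: string, installed: string[], framework: string): string {
  const cfg = JSON.parse(raw) as ComponentsJson;
  const lines: string[] = [`Framework: ${framework}`];
  if (cfg.style) lines.push(`Style: ${cfg.style}`);
  if (cfg.iconLibrary) lines.push(`Icon library: ${cfg.iconLibrary}`);
  if (cfg.tailwind?.baseColor) lines.push(`Base color: ${cfg.tailwind.baseColor}`);
  // Aliases tell the agent which import paths to use, e.g. "@/components/ui".
  for (const [name, path] of Object.entries(cfg.aliases ?? {})) {
    lines.push(`Alias ${name}: ${path}`);
  }
  lines.push(`Installed components: ${installed.length ? installed.join(", ") : "none"}`);
  return lines.join("\n");
}

// Example usage with an illustrative config.
const sample = JSON.stringify({
  style: "new-york",
  iconLibrary: "lucide",
  tailwind: { css: "app/globals.css", baseColor: "neutral", cssVariables: true },
  aliases: { components: "@/components", ui: "@/components/ui", utils: "@/lib/utils" },
});
console.log(buildAgentContext(sample, ["button", "dialog"], "next"));
```

The point of the design is that the agent gets explicit facts (import aliases, icon set, what is already installed) instead of guessing them from file names, which is where a lot of “random” agent output comes from.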

📱Remote: HAPI is trying to make agent sessions portable instead of stationary

tiann/hapi is a local-first wrapper for tools like Claude Code, Codex, Gemini, Cursor Agent, and OpenCode. The core pitch is simple: let the heavy lifting stay on your own machine, but make the session reachable through a web app, PWA, or messaging interface so you can monitor it, approve actions, and even run terminal commands from your phone.

I like this category because it attacks an underrated bottleneck. The hard part is no longer only generating code; it is maintaining continuity when you step away from your desk. HAPI’s README and website lean hard into that “continue anywhere, switch back anytime” idea, with QR-code onboarding, mobile code review, slash commands, and WireGuard-plus-TLS encrypted relay support. If coding agents are going to become background workers, tools like this are what make the workflow feel plausible.

A local-first remote control layer for AI coding sessions, built to let you launch, review, and steer agent work from your phone or browser.

⚡Quick Hits

PinchBench - Success Rate Leaderboard - Still one of the more useful agent-eval projects I saw this week. It tests 23 practical OpenClaw tasks across 33 models, which is much closer to real workflow friction than classic benchmark trivia.

pinchbench.com

Which models are you using? - A useful reality check from the OpenClaw community: premium subscriptions and rate limits still push people toward model routing, with stronger models reserved for hard tasks and cheaper ones used for research, browsing, and setup work.

reddit.com

avinash201199/founders-kit - More directory than breakthrough, but still a handy open-source collection of startup resources spanning fundraising, product, design, analytics, hosting, and distribution. If you mentor founders, this is the kind of repo you keep bookmarked.

github.com

Opus 4.6 is smart enough to realize it is being evaluated - One of the most-shared X posts this week claimed that the model recognized an evaluation setup, found a mirrored file, and reverse-engineered the answer key. Treat it as anecdotal rather than definitive, but it is a good reminder that benchmark design is about incentives as much as intelligence.

x.com
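The model-routing habit from the OpenClaw thread is easy to picture as a tiny router. This is a hypothetical sketch, not any OpenClaw configuration format: the task categories, the two tiers, and the premiumCallsLeft budget standing in for a subscription rate limit are all illustrative assumptions.

```typescript
// Hypothetical task categories and model tiers; placeholders, not real model IDs.
type TaskKind = "hard-coding" | "planning" | "research" | "browsing" | "setup";
type Tier = "premium" | "budget";

// Hard tasks prefer the stronger model; routine work goes to the cheaper one.
const ROUTES: Record<TaskKind, Tier> = {
  "hard-coding": "premium",
  planning: "premium",
  research: "budget",
  browsing: "budget",
  setup: "budget",
};

interface RouterState {
  premiumCallsLeft: number; // stand-in for a subscription or rate-limit allowance
}

// Pick a tier for a task, falling back to the budget tier once the
// premium allowance is used up.
function route(kind: TaskKind, state: RouterState): Tier {
  if (ROUTES[kind] === "premium" && state.premiumCallsLeft > 0) {
    state.premiumCallsLeft -= 1;
    return "premium";
  }
  return "budget";
}

// Example: one premium call available, then everything degrades gracefully.
const state: RouterState = { premiumCallsLeft: 1 };
console.log(route("hard-coding", state), route("hard-coding", state), route("browsing", state));
```

Real setups would route on richer signals (task difficulty, context size, cost per token), but the shape is the same: spend scarce premium capacity only where it changes the outcome.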

Have a great week!